“Validate just sounds like you make sure it works, right?” Not quite.

The Validation phase of the UX Process confirms that the designed solution works for the user, and that our understanding of the user and the request was accurate. If I’m being hypercritical, the terms “test,” “research,” “study,” or “examine” would be more accurate. “Validate” implies that you’re just trying to make sure your assumptions are correct, and not that you have to play devil’s advocate to ensure that it can’t be improved upon further.

This is part three of our three-part blog series diving deeper into the phases of the UX process, and how that process helps bring better solutions to more users, faster.

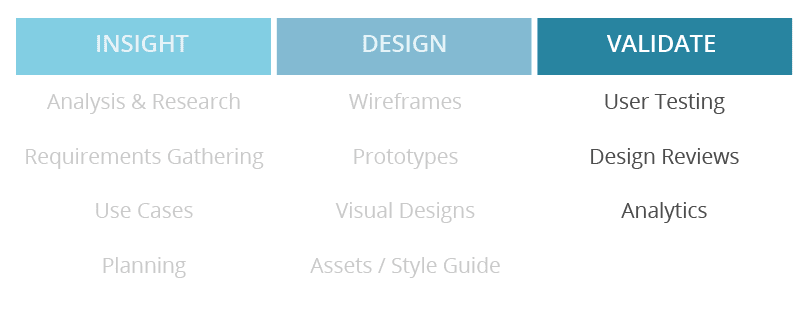

A typical user experience lifecycle includes three primary phases: Insight, Design, and Validate. In this blog, we’ll be talking about the validation phase, and how it shapes the rest of the process.

The main purpose of this phase is to confirm, with real users and realistic scenarios, that your understanding of the problem was accurate and that the proposed solution meets the users’ needs.

Much as in the Insight phase, you are testing whether a designed solution is useful for real users. Put it in front of them and give them guidelines so they can give you useful feedback. This video from the Nielsen Norman Group (leaders in the usability industry) gives a great overview of how to get quality feedback with minimal effort.

When testing design solutions you’ll typically want to do the following:

Prototype

It doesn’t have to be a built-out demo, but that doesn’t hurt. A prototype is the most accurate way to demonstrate how the end product will function, and it leaves the least room for imagination to cause communication issues. (For example: a beta site with demo functionality, or an interactive prototype built in a tool like InVision.)

You’ll need to identify the right type of users to include in the prototype test and recruit them, following the same recruiting steps as in the Insight phase. They may even be the same users, which is a good way to follow through on the feedback they gave when you were collecting research. Typically, 5-7 users per test are enough to give you a representative picture. With more than 7, you tend to hear the same comments again, and the sessions get repetitive.

Test Scripts

You’ll need to flesh out what the goals of the solution are and how you plan to test them, then write a script for users to follow during the test. It’s important to cover the primary use case scenarios, with realistic data, and to write the script so that it tells users what they need to accomplish without telling them how to do it. For example, if they need to find a specific claim on a new page and reconcile it using a new drawer that appears, don’t write “reconcile claim #100 within the new reconcile drawer.” Instead, naming the action (“reconcile”) and the claim number should be enough. If they can’t find a way to reconcile that claim from the screen on their own, then the design isn’t intuitive enough and will cause problems. If they can easily find the action and use the new drawer without being guided, then congratulations! You’ve created an intuitive solution that helps the user and reduces the need for excessive training.

Metrics

As we mentioned in the first UX blog post of this series, UX Insight: Better Design By Users Like You, you’ll need to track metrics so there’s a measurable way to represent the success or failure of your design solution. The basics are errors, deviations, the average time to complete each task from the script, and the overall success rate for completing the tasks. Together they help you express usability in real numbers.
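If you log each test session in a spreadsheet or a script, the basic metrics above are simple to compute. Here is a minimal Python sketch of one way to do it. The `TaskResult` record, its field names, and the sample numbers are all made up for illustration, so adapt them to whatever you actually capture during your sessions.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskResult:
    """One participant's attempt at one scripted task (hypothetical record shape)."""
    participant: str
    task: str
    completed: bool
    seconds: float    # time spent on the attempt, whether or not it succeeded
    errors: int       # e.g. wrong clicks or failed submissions
    deviations: int   # steps taken off the expected path


def summarize(results: list[TaskResult]) -> dict[str, dict[str, float]]:
    """Group results by task and compute the basic usability metrics."""
    by_task: dict[str, list[TaskResult]] = {}
    for r in results:
        by_task.setdefault(r.task, []).append(r)

    summary = {}
    for task, rows in by_task.items():
        summary[task] = {
            # Share of participants who finished the task
            "success_rate": sum(r.completed for r in rows) / len(rows),
            # Averages are taken over all attempts, including failed ones
            "avg_seconds": mean(r.seconds for r in rows),
            "avg_errors": mean(r.errors for r in rows),
            "avg_deviations": mean(r.deviations for r in rows),
        }
    return summary


# Made-up sample data, for illustration only
results = [
    TaskResult("P1", "reconcile claim", True, 40.0, 0, 1),
    TaskResult("P2", "reconcile claim", True, 60.0, 1, 0),
    TaskResult("P3", "reconcile claim", False, 110.0, 3, 2),
    TaskResult("P4", "reconcile claim", True, 50.0, 0, 1),
]
summary = summarize(results)
print(summary["reconcile claim"])
```

Averaging time over every attempt is just one choice. You may prefer to average only the successful attempts, so that abandoned tasks don’t skew the number. Whichever you pick, keep it consistent between test rounds so the results stay comparable.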

Questions & Unstructured Feedback

You don’t know what you don’t know. You can have rigorously vetted which users would be right for which user tests, and thoroughly covered all your known scenarios and personas, and yet… real users can still surprise you at any moment. Make sure there’s a safe and comfortable way for them to give you unstructured feedback during the test. They are the subject matter experts, and they’ve seen it all. They should feel comfortable enough to ask questions about the design and shed light on the unknowns so you can tackle them early. User tests are as much an opportunity for you to learn new things as they are a way to validate what you already knew.

Reporting

Once you’ve done your testing and recorded your metrics, analyze the information and present it clearly to the stakeholders and the other members of your team. Here’s where what you learned can shape the next stage of design and feed into the next iteration of the Insight phase.

—

After all that, the cycle begins again. Based on user test results, you can tweak your design or release it, and keep the UX process going because, in reality, UX work is never truly “done.” It’s always changing to keep up with the users and adapt to the changing digital environment.

Read the 3-part blog series: INSIGHT – DESIGN – VALIDATE

Get #UXcited!